Model Identification

Choosing the orders \((p,q)\) of an ARMA model means balancing statistical fit against model parsimony.

1. ACF and PACF Behavior

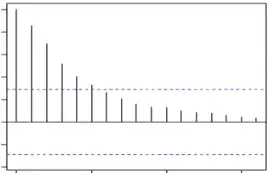

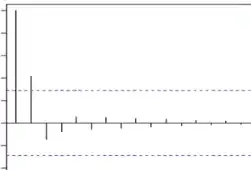

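The primary visual method for identifying model orders is examining the Autocorrelation Function (ACF) and Partial Autocorrelation Function (PACF) plots. For a pure MA(\(q\)) process the ACF cuts off after lag \(q\), and for a pure AR(\(p\)) process the PACF cuts off after lag \(p\); for a mixed ARMA process both functions tail off gradually.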

- Significance: Most plots include 95% confidence bands, typically drawn at about \(\pm 1.96/\sqrt{n}\); correlation spikes extending beyond these bands are considered statistically significant. Roughly one lag in twenty can cross the bands by chance alone, so an isolated spike at a high lag is weak evidence.

- Complexity: For large or messy datasets, these plots become harder to interpret. When multiple models seem plausible, we follow the principle of parsimony and prefer the model with the fewest parameters that still fits the data adequately.
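The significance check described above can be sketched in a few lines. This is a minimal illustration, assuming bands at \(\pm 1.96/\sqrt{n}\) (the usual white-noise approximation) and a simulated MA(1) series as stand-in data; the helper names `sample_acf` and `significant_lags` are hypothetical, not part of any library.

```python
import numpy as np

def sample_acf(x, max_lag):
    """Sample autocorrelations r_0, ..., r_max_lag of a 1-D series."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    denom = np.dot(x, x)
    return np.array(
        [np.dot(x[: len(x) - k], x[k:]) / denom for k in range(max_lag + 1)]
    )

def significant_lags(x, max_lag):
    """Return lags 1..max_lag outside +/- 1.96/sqrt(n), plus the band width."""
    band = 1.96 / np.sqrt(len(x))  # assumed approximate 95% band
    r = sample_acf(x, max_lag)
    lags = [k for k in range(1, max_lag + 1) if abs(r[k]) > band]
    return lags, band

# Illustrative data only: simulated MA(1), y_t = e_t + 0.8 * e_{t-1}
rng = np.random.default_rng(0)
e = rng.standard_normal(501)
y = e[1:] + 0.8 * e[:-1]

lags, band = significant_lags(y, max_lag=10)
print(f"band = +/-{band:.3f}; lags outside the band: {lags}")
```

For an MA(1) series like this one, lag 1 should stand well outside the band, while any higher lags that appear in the list are candidates for chance crossings.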

2. Information Criteria

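Information criteria provide a numerical way to compare models: each adds a penalty for the number of estimated parameters (\(M\)) to a measure of fit, which guards against overfitting. Among candidate models fitted to the same data, the one with the smallest value is preferred.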

Akaike's Information Criterion (AIC)

Introduced in 1974, AIC measures the trade-off between the goodness of fit and the complexity of the model.

\[AIC = -2\log(\text{Maximum Likelihood}) + 2M\]

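Under the assumption that \(e_{t} \sim \mathcal{N}(0, \sigma_{e}^{2})\), the maximized likelihood depends on the data only through the estimated residual variance \(\hat{\sigma}_{e}^{2} = \text{RSS}/n\), where RSS is the residual sum of squares. Dropping an additive constant that is the same for every model, AIC simplifies to:

\[AIC = n \log \hat{\sigma}_{e}^{2} + 2M\]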
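The simplification can be spelled out as follows. This is a sketch that treats the residuals as independent Gaussian terms (a conditional-likelihood approximation, stated here as an assumption) and writes \(\hat{\sigma}_{e}^{2} = \text{RSS}/n\) for the maximum likelihood variance estimate:

\[
\begin{aligned}
\log L(\hat{\sigma}_{e}^{2}) &= -\frac{n}{2}\log\left(2\pi\hat{\sigma}_{e}^{2}\right) - \frac{\text{RSS}}{2\hat{\sigma}_{e}^{2}} = -\frac{n}{2}\log(2\pi) - \frac{n}{2}\log\hat{\sigma}_{e}^{2} - \frac{n}{2}, \\
-2\log L(\hat{\sigma}_{e}^{2}) + 2M &= n\log\hat{\sigma}_{e}^{2} + 2M + n\left(1 + \log 2\pi\right).
\end{aligned}
\]

The last term, \(n(1 + \log 2\pi)\), does not depend on the model, so it cancels whenever two models fitted to the same \(n\) observations are compared.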

Schwarz's Bayesian Criterion (SBC/BIC)

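Introduced in 1978, the Bayesian Information Criterion (BIC, also written SBC) replaces AIC's penalty of 2 per parameter with \(\log n\). Because \(\log n > 2\) once \(n \geq 8\), it penalizes additional parameters more heavily than AIC for all but the smallest samples, and the gap widens as \(n\) grows, so it tends to select smaller models.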

\[SBC = n \log \hat{\sigma}_{e}^{2} + M \log n\]

Hannan-Quinn Information Criterion (HQIC)

Proposed by Hannan and Quinn in 1979, this criterion uses a penalty term involving a double logarithm, which grows more slowly with \(n\) than the SBC penalty. It is statistically sound, but AIC and SBC remain more popular because of their simplicity.

\[HQIC = n \log \hat{\sigma}_{e}^{2} + 2M \log(\log n)\]
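To make the three formulas concrete, here is a minimal sketch that evaluates them from a list of residuals. The function name `information_criteria` and the toy residuals are illustrative assumptions; the variance estimate is taken to be RSS divided by \(n\).

```python
import math

def information_criteria(residuals, num_params):
    """Evaluate AIC, SBC and HQIC in their residual-variance forms."""
    n = len(residuals)
    sigma2 = sum(e * e for e in residuals) / n  # assumed estimate: RSS / n
    fit = n * math.log(sigma2)                  # shared goodness-of-fit term
    return {
        "AIC": fit + 2 * num_params,
        "SBC": fit + num_params * math.log(n),
        "HQIC": fit + 2 * num_params * math.log(math.log(n)),
    }

# Toy residuals with variance exactly 1, so only the penalties remain
toy_residuals = [1.0, -1.0] * 50   # n = 100
scores = information_criteria(toy_residuals, num_params=3)
for name, value in scores.items():
    print(f"{name}: {value:.3f}")
```

With \(n = 100\) and \(M = 3\) the fit term is zero, so the printed values are the penalties themselves: 6 for AIC, about 9.16 for HQIC, and about 13.82 for SBC, which shows the ordering of the three penalties at this sample size.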

3. Advanced Identification Techniques

Beyond standard ACF/PACF plots, several advanced tools help refine model selection:

- Sample Inverse Autocorrelation Function (SIACF): Often captures orders in seasonal models more effectively than PACF and is highly useful for detecting over-differencing.

- Extended Sample Autocorrelation Function (ESACF): Can tentatively identify orders for both stationary and non-stationary ARMA processes. Unlike criteria based on Maximum Likelihood Estimation (MLE), ESACF relies on iterated least squares estimates.

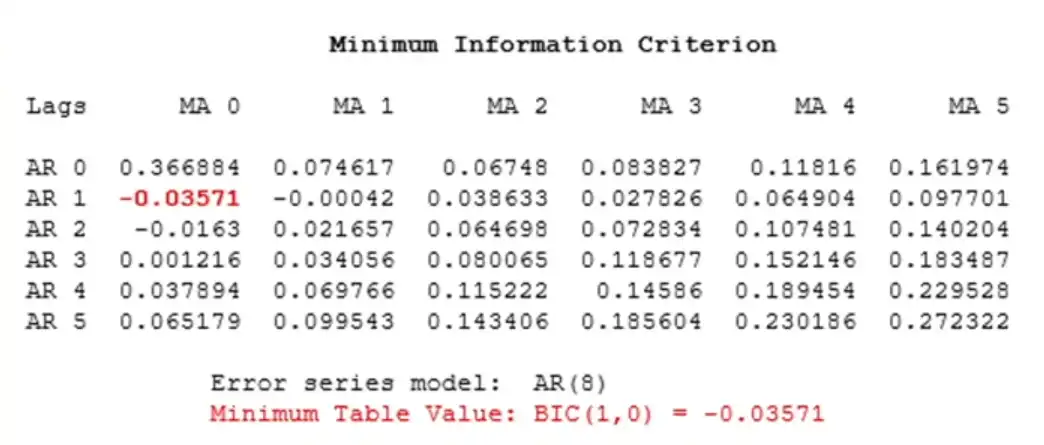

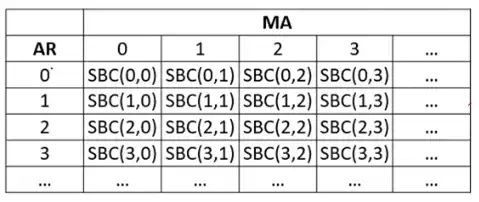

- Minimum Information Criterion (MINIC): This method automates selection by creating a grid of AIC or SBC values for various combinations of \(p\) and \(q\). The goal is to find the cell with the minimum value, which suggests tentative orders for the model; these should still be confirmed with the usual residual diagnostics.
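A grid search of this kind can be sketched with NumPy alone. The version below is an illustration under stated assumptions, not the implementation used by any particular package: it swaps full maximum likelihood for a two-stage least-squares approximation (a long autoregression to estimate the innovations, then a regression on lagged values and lagged innovations), and it fills each cell with the SBC formula given earlier. The names `lagmat`, `minic_table`, and `long_ar` are hypothetical.

```python
import numpy as np

def lagmat(x, lags, start):
    """Columns x[t-1], ..., x[t-lags] for t = start, ..., len(x) - 1."""
    n = len(x)
    if lags == 0:
        return np.empty((n - start, 0))
    return np.column_stack([x[start - k : n - k] for k in range(1, lags + 1)])

def minic_table(y, max_p, max_q, long_ar=10):
    """Grid of SBC values for ARMA(p, q), p <= max_p, q <= max_q."""
    y = np.asarray(y, dtype=float)
    y = y - y.mean()
    n = len(y)
    # Stage 1: long AR fit by least squares to approximate the innovations
    X = lagmat(y, long_ar, long_ar)
    coef, *_ = np.linalg.lstsq(X, y[long_ar:], rcond=None)
    innov = np.zeros(n)
    innov[long_ar:] = y[long_ar:] - X @ coef
    # Stage 2: same estimation sample for every cell so values are comparable
    start = long_ar + max(max_p, max_q)
    target = y[start:]
    m = len(target)
    table = np.empty((max_p + 1, max_q + 1))
    for p in range(max_p + 1):
        for q in range(max_q + 1):
            Z = np.hstack([lagmat(y, p, start), lagmat(innov, q, start)])
            if Z.shape[1] == 0:
                resid = target  # no regressors: the (0, 0) white-noise cell
            else:
                b, *_ = np.linalg.lstsq(Z, target, rcond=None)
                resid = target - Z @ b
            sigma2 = resid @ resid / m
            table[p, q] = m * np.log(sigma2) + (p + q) * np.log(m)
    return table

# Illustrative data only: simulated AR(2), y_t = 0.6 y_{t-1} - 0.3 y_{t-2} + e_t
rng = np.random.default_rng(1)
e = rng.standard_normal(700)
y = np.zeros(700)
for t in range(2, 700):
    y[t] = 0.6 * y[t - 1] - 0.3 * y[t - 2] + e[t]
y = y[100:]  # drop burn-in

table = minic_table(y, max_p=3, max_q=3)
best_p, best_q = np.unravel_index(np.argmin(table), table.shape)
print(np.round(table, 1))
print(f"minimum at p={best_p}, q={best_q}")
```

Rows index \(p\) and columns index \(q\). Cells whose values lie close to the minimum are worth fitting properly and comparing, since the two-stage regression only approximates the likelihood-based criteria.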